Author

Li Yaqi, Research Assistant at CGAIG

In the latest wave of scientific and technological transformation driven by artificial general intelligence (AGI), integrated capability across compute, research, and data is no longer a matter of isolated, project-by-project investment. It has become part of the underlying structure of national competitiveness and of a country’s capacity to provide essential public goods. Whether a nation can institutionalize advanced compute, trusted data, and an auditable framework for safety assessment and benchmarking into a stable, replicable, and scalable shared infrastructure layer will determine whether it can upgrade its research paradigm into national-scale engineering programs oriented toward complex systems.

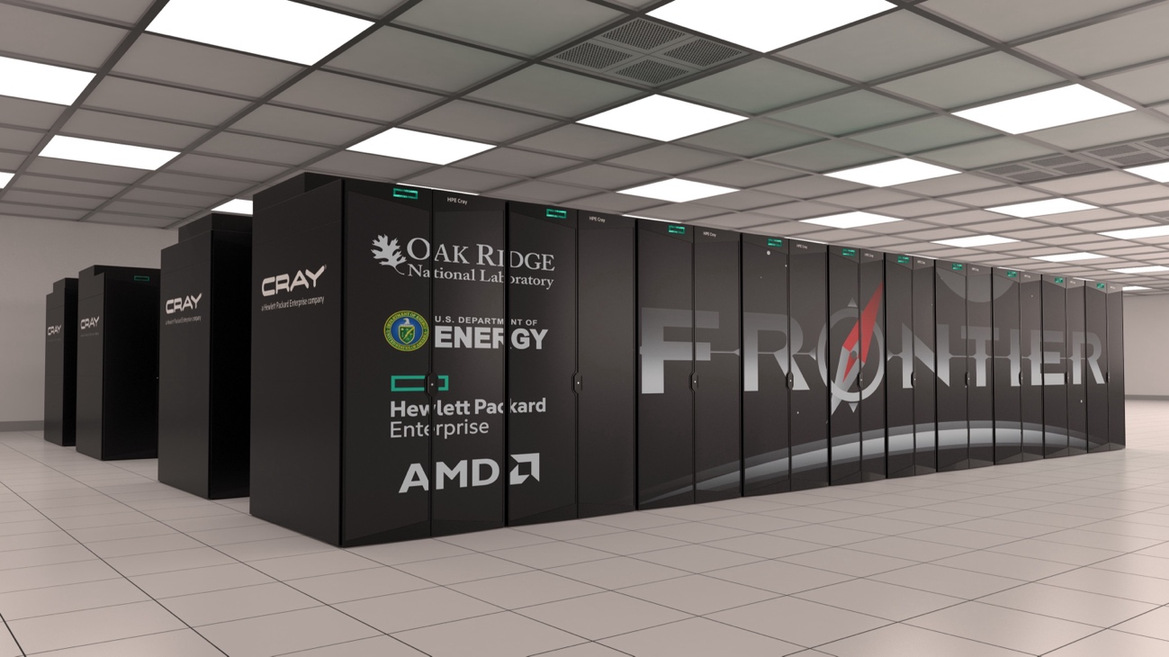

The United States has built such capabilities with the U.S. Department of Energy (DOE) national laboratory system as the central hub. Leadership-class supercomputing and dedicated research networks, open-access scientific user facilities, standards and evaluation frameworks, and flagship demonstration programs for critical use cases together form a closed loop from strategic objectives to deliverable outcomes. By tightly integrating the tradition of big science with modern governance instruments and shaping the innovation order through public infrastructure, this approach offers a practical reference for designing future-facing national AI capabilities.

Under the title “Building Foundational Public Infrastructure for AI Compute, Research, and Data: Lessons from the U.S. National Laboratories,” this article provides a systematic review of the institutional logic and implementation mechanisms behind the national laboratory model.