The annual GPU Technology Conference (GTC 2026), held in San Jose, California, from March 16 to 20, 2026, reportedly drew over 30,000 developers from more than 190 countries. The core focus of this year’s conference appeared to pivot from model training toward AI inference and large-scale deployment. In his keynote and subsequent engagements, NVIDIA CEO Jensen Huang outlined a systematic vision regarding industry trends, technical roadmaps, and ecosystem strategy, eliciting multifaceted responses across the technical, industrial, and regulatory sectors.

01 Embracing the “Inflection Point”: A New Narrative

Keynote speeches by Jensen Huang at successive GTC events have consistently attracted significant attention. At GTC 2026, the presentation covered roadmap updates, infrastructure disclosures, and new product launches. Through the presentation of technical data and conceptual frameworks, Huang outlined a new narrative for the AI industry:

Jensen Huang delivers a keynote at GTC 2026

Source: NVIDIA

First, the industry is reaching an “inflection point” as the focus shifts from training to inference. While the iterative training of large models has long been the primary driver of compute consumption, the maturation of generative AI and the proliferation of reasoning models appear to be shifting the anchor of demand toward the inference side. This transition is widely read as a signal of a new, long-cycle wave of demand for computing power.

Second, as the agentic ecosystem drives a surge in inference requirements, the token is increasingly regarded as a key factor of production. Huang introduced the concepts of “Tokenomics” and “AI Factories.” Under physical constraints such as power and land, industry competition may no longer center solely on parameter scale, but on token production efficiency and cost advantage. A tiered pricing system based on response speed and processing capacity is expected to emerge. Traditional data centers may in turn evolve from storage facilities into “AI factories” that produce tokens and diverse AI services, turning them from cost centers into revenue-generating production systems.
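The tiered-pricing and “token production efficiency” argument can be made concrete with a little arithmetic. The sketch below is a minimal illustration under loudly stated assumptions: the tier names, prices, and throughput figures are hypothetical and do not come from NVIDIA or any provider. It encodes one plausible trade-off, namely that promising each user faster responses shrinks batch sizes and so lowers the aggregate tokens a fixed power budget can produce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    """A hypothetical service tier. All numbers are illustrative assumptions."""
    name: str
    user_tokens_per_sec: float    # response speed promised to each user
    usd_per_million: float        # price charged per million output tokens
    tokens_per_sec_per_mw: float  # aggregate output one megawatt sustains at that speed


def hourly_revenue(tier: Tier, power_mw: float) -> float:
    """Revenue per hour if the whole power budget serves a single tier."""
    tokens_per_hour = tier.tokens_per_sec_per_mw * power_mw * 3600
    return tokens_per_hour / 1_000_000 * tier.usd_per_million


# Hypothetical tiers: the fast tier trades aggregate throughput for per-user speed.
STANDARD = Tier("standard", 50.0, 2.0, 2_000_000.0)
FAST = Tier("fast", 200.0, 10.0, 500_000.0)

if __name__ == "__main__":
    for tier in (STANDARD, FAST):
        revenue = hourly_revenue(tier, power_mw=100.0)
        print(f"{tier.name:>8}: {tier.user_tokens_per_sec:.0f} tok/s per user, "
              f"${revenue:,.0f} per hour from 100 MW")
```

Under these made-up figures the fast tier earns more from the same 100 MW despite producing a quarter of the tokens; with different prices the ranking flips, which is the kind of trade-off an “AI factory” operator would have to tune.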

Third, the outlook for a “trillion-dollar” demand was emphasized alongside a full-stack architectural layout. Huang disclosed that cumulative orders for the Blackwell and Vera Rubin architectures, including associated systems, are expected to reach at least $1 trillion by the end of 2027. Reportedly, approximately 60% of these orders originate from major cloud service providers, with the remainder coming from governments, Sovereign AI initiatives, and industrial enterprises. This projection suggests that the industry may remain in a high-investment cycle through 2027, signaling that infrastructure demand has yet to peak.

02 Full-Stack Architecture: The “Five-Layer Cake”

NVIDIA’s announcements at the conference were not limited to hardware upgrades but represented a coordinated strategy across hardware architecture and software ecosystems. The company appears to be positioning itself as a provider of full-stack solutions, spanning from foundational compute to top-level ecosystems.

Jensen Huang presents the next-generation Vera Rubin architecture

Source: NVIDIA

On the hardware side, NVIDIA announced that the new Vera Rubin architecture has entered mass production. The company has also integrated Language Processing Unit (LPU) technology obtained through its deal with Groq into its product suite, building heterogeneous compute clusters that pair LPUs with GPUs. In this configuration, GPUs, with their larger memory capacity, handle the prefill stage (processing user input and long contexts), while LPUs take over token generation in the decode phase to minimize latency. Additionally, NVIDIA unveiled Spectrum-X, reportedly the world’s first mass-produced Co-Packaged Optics (CPO) switch, and previewed a prototype of the Feynman architecture scheduled for 2028.
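The GPU/LPU split described above follows the general pattern often called disaggregated prefill and decode: one pool of hardware processes the prompt, another generates the output tokens. The back-of-envelope model below is a sketch under hypothetical assumptions. The throughput numbers and the fixed hand-off cost are invented for illustration and are not NVIDIA or Groq specifications; real systems must also move the KV cache between devices, which is folded here into a single `handoff_sec` parameter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    """Hypothetical accelerator profile; all rates are illustrative assumptions."""
    name: str
    prefill_tokens_per_sec: float  # prompt-processing throughput for one request
    decode_tokens_per_sec: float   # output-generation speed for one request


def request_latency(prompt_tokens: int, output_tokens: int,
                    prefill: Device, decode: Device,
                    handoff_sec: float = 0.0) -> tuple[float, float]:
    """Return (time to first token, total latency) in seconds.

    handoff_sec models the cost of moving state (e.g. the KV cache) from the
    prefill device to the decode device; use zero when both are the same.
    """
    time_to_first_token = prompt_tokens / prefill.prefill_tokens_per_sec + handoff_sec
    decode_time = output_tokens / decode.decode_tokens_per_sec
    return time_to_first_token, time_to_first_token + decode_time


GPU = Device("gpu", prefill_tokens_per_sec=20_000, decode_tokens_per_sec=100)
LPU = Device("lpu", prefill_tokens_per_sec=2_000, decode_tokens_per_sec=500)

if __name__ == "__main__":
    prompt, output = 40_000, 1_000  # a long-context request
    for label, p, d, h in [("gpu only", GPU, GPU, 0.0),
                           ("lpu only", LPU, LPU, 0.0),
                           ("gpu prefill + lpu decode", GPU, LPU, 0.05)]:
        ttft, total = request_latency(prompt, output, p, d, h)
        print(f"{label:>25}: first token {ttft:.2f}s, total {total:.2f}s")
```

With these invented figures, the split configuration keeps the GPU’s fast time to first token and the LPU’s fast generation (about 4 seconds in total versus 12 for the GPU alone). If the hand-off cost grew large, or prompts were short, the advantage would shrink.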

On the software and ecosystem side, the company introduced NemoClaw, an enterprise-grade agent deployment platform, paired with the OpenShell security sandbox to build a compliance-oriented architecture for autonomous agents. Huang suggested that the software industry may evolve from Software as a Service (SaaS) toward Agent as a Service (AaaS), where agents autonomously complete tasks and deliver results rather than merely providing tools for human operation.

These developments reflect NVIDIA’s broader ambition to position itself as a full-stack infrastructure provider. Since late 2025, Huang has increasingly referred to a “five-layer” model of the AI industry, comprising energy, chips, infrastructure, models, and applications. The framework appears to serve both as a conceptual simplification of the AI stack and as a strategic justification for NVIDIA’s expansion across multiple layers.

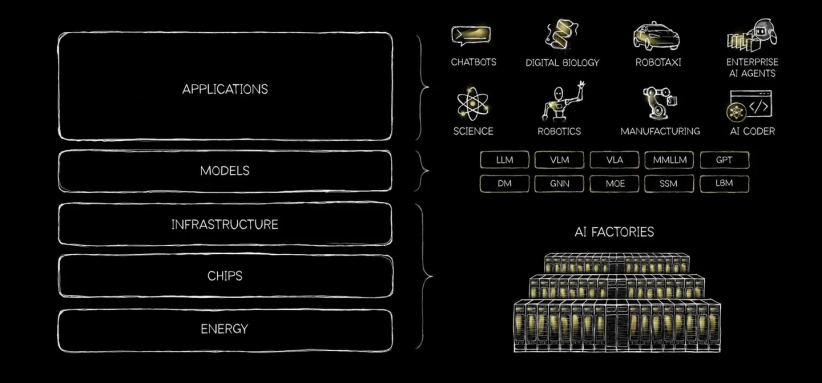

Diagram of the “five-layer cake” model of the AI industry

Source: NVIDIA

Energy supply sits at the base of this five-layer framework. Huang emphasized that power capacity has become a hard constraint on AI development, since the electricity available in a given region directly caps the computing power that can be deployed there. The second layer consists of chips, which determine the computational performance achievable per unit of energy. The third layer is data center infrastructure, encompassing land, construction, power systems, cooling equipment, and high-speed networking. The fourth layer comprises models, which are fine-tuned for specific application scenarios. At the top are end-user application services, such as enterprise software, agents, and autonomous driving, the final stage at which the economic value of AI is realized.
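The claim that electricity caps deployable compute, and that chips set how much compute each unit of energy buys, reduces to simple arithmetic. The sketch below assumes a hypothetical 100 MW site, a power usage effectiveness (PUE, total facility power divided by IT power) of 1.25, and an invented all-in IT draw and throughput per accelerator; none of these figures describe a real NVIDIA product.

```python
import math


def deployable_accelerators(facility_mw: float, it_watts_per_accelerator: float,
                            pue: float) -> int:
    """Accelerators a site can power once cooling and other overhead (PUE) is paid for."""
    it_power_watts = facility_mw * 1_000_000 / pue  # layer 1: energy left for IT load
    return math.floor(it_power_watts / it_watts_per_accelerator)


def site_petaflops(facility_mw: float, it_watts_per_accelerator: float,
                   pue: float, petaflops_per_accelerator: float) -> float:
    """Aggregate compute: layer 1 (power budget) times layer 2 (performance per chip)."""
    count = deployable_accelerators(facility_mw, it_watts_per_accelerator, pue)
    return count * petaflops_per_accelerator


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    print(deployable_accelerators(100, 2_000, 1.25))  # 40000
    print(site_petaflops(100, 2_000, 1.25, 10.0))     # 400000.0
    # A chip with twice the performance at the same power doubles site compute.
    print(site_petaflops(100, 2_000, 1.25, 20.0))     # 800000.0
```

At a fixed power budget, the only levers left are overhead (PUE) and performance per watt, which is why, in this framework, the upper layers depend on the lower ones.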

Through this framework, NVIDIA is attempting to reposition itself from a hardware manufacturer to a core facilitator of full-stack AI infrastructure. Huang has suggested that supporting what he characterizes as the largest infrastructure build-out in human history requires a fundamental reconfiguration of the entire computing stack. The strategy is described as a blend of vertical integration and horizontal openness: maintaining vertical control over critical components such as chips, systems, and software, while offering open interfaces and ecosystems to developers and partners. This narrative is seen as an attempt to embed NVIDIA into every stage of the AI value chain, from energy management to application delivery, to reinforce its position within the global computing infrastructure.

03 Between Vision and Reality: Market Expectations

Internally, NVIDIA’s technical teams emphasize their existing ecosystem advantages while acknowledging challenges in implementation. Ian Buck, Vice President of Hyperscale and HPC at NVIDIA, stated that AI-assisted programming has not diminished the relevance of CUDA; rather, the need for performance optimization has reportedly deepened dependence on the underlying platform. Deepu Talla, Vice President of Robotics and Edge AI, noted that while agents represent a turning point for robotics, challenges such as data scarcity and safety in complex environments remain significant, suggesting that large-scale deployment may still be some time away.

Ian Buck

Source: Bloomberg

From a capital market perspective, a tension exists between NVIDIA’s long-term narrative and short-term valuation. While institutions such as JPMorgan Chase, Goldman Sachs, and Bernstein maintain positive ratings, investors appear cautious. By March 25, 2026, the company’s stock had reportedly fallen by over 6% year-to-date. During the GTC conference, the stock experienced a brief surge followed by a decline, closing more than 5% lower on March 20.

From an industry perspective, certain observers remain skeptical of the projected “trillion-dollar demand.” AI inference reportedly imposes more stringent requirements on latency, energy efficiency, and deployment flexibility, which appears to create opportunities for architectural diversity. On the supply side, Maria Sukhareva, Chief AI Strategist at Siemens and founder of the consultancy AI Realist, and reports in the Financial Times have both noted that NVIDIA faces multi-front competitive pressure from firms such as Huawei and Google, which are developing in-house silicon and alternative ecosystems. On the demand side, Chris Smith, a partner at McKinsey & Company, emphasized that while topics such as “Tokenomics” and autonomous agents are increasingly on executive agendas, most initiatives remain in the pilot phase. A comprehensive transition reportedly faces critical bottlenecks concerning capital investment, implementation, and return on investment (ROI).

Overall, the industry appears to have stopped short of uncritically adopting Huang’s narrative. Nevertheless, there is broad agreement that his technical and commercial frameworks have significantly shaped both industry sentiment and capital expectations. While NVIDIA reportedly maintains its dominant market position through its full-stack platform and ecosystem lock-in, the onset of the inference era appears to be reshaping the competitive landscape. The earlier paradigm, in which a near-monopoly on compute-intensive training defined the market, is increasingly giving way to one defined by efficiency-driven competition and architectural pluralism.

Discussion surrounding the concept of “Tokenomics” and its industry implications.

Source: Financial Times / Efi Chalikopoulou

04 Chinese Companies Gain Visibility

GTC 2026 also featured participation from a number of Chinese firms across AI and robotics, highlighting developments in large models, embodied intelligence, and autonomous driving.

In the large-model sector, Yang Zhilin, founder of Moonshot AI, delivered a keynote speech titled How We Scaled Kimi K2.5. As the only founder of an independent Chinese AI firm to speak on the main stage at GTC 2026, Yang disclosed the technical roadmap behind Kimi K2.5 for the first time.

During the presentation, Yang framed Kimi’s evolution around three key dimensions: token efficiency, long-context capabilities, and agent clusters. He introduced next-generation architectural developments to global developers, most notably the “Attention Residual” technique. This method reportedly allows models to selectively draw on information from preceding layers, which is said to significantly improve computational efficiency. According to experimental data presented during the session, training efficiency for a 48B-parameter model improved by a factor of 1.25 with these architectural changes.
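The session figures quoted here do not say exactly how “training efficiency” was measured. Under one common reading, which is an assumption here rather than something stated in the talk, a 1.25× efficiency gain means reaching the same model quality with proportionally less training compute $C$:

$$
C_{\text{new}} = \frac{C_{\text{base}}}{1.25} = 0.8\,C_{\text{base}},
\qquad
\text{savings} = 1 - \frac{C_{\text{new}}}{C_{\text{base}}} = 0.2 = 20\%
$$

Equivalently, a fixed compute budget would buy 1.25 times the baseline-equivalent training progress. If the figure instead refers to throughput or wall-clock time, the same ratio applies to that quantity rather than to total compute.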

The technique has attracted significant industry attention. In addition to praise from Elon Musk, Jerry Tworek, former Vice President of Research at OpenAI, reportedly characterized the development as ushering in an era of “Deep Learning 2.0” and suggested that it could become a standard feature of future AI model architectures.

Yang Zhilin delivers a keynote at GTC 2026

Source: TMTPost

In the robotics sector, Wang Xingxing, CEO of Unitree Robotics, delivered a virtual keynote address identifying what he characterized as critical transition points for the embodied AI industry. He highlighted three primary bottlenecks: limited model representation, a critical shortage of real-world data, and the absence of scalable reuse mechanisms for reinforcement learning. Wang expressed a preference for world models and video generation as technical roadmaps, while framing the development of embodied AI as a shared global endeavor.

The Galbot G1, developed by Beijing Galbot Co., Ltd., was featured in Huang’s keynote as a representative real-world application of Chinese-developed embodied AI. The presentation showcased the robot’s deployment at a high-density pharmacy for the US-based medical AI firm PeritasAI. The Galbot G1 reportedly demonstrated the ability to autonomously navigate, identify, and transport surgical instruments—capabilities that are seen as validating its industrial-grade reliability and compatibility with global ecosystems.

Additionally, several unicorn companies showcased recent developments. In autonomous driving, WeRide (WRD) presented the Robotaxi GXR, characterized as a factory-installed, mass-produced flagship model. In embodied AI, Lightwheel AI demonstrated several simulated robot instances, showcasing the kind of simulation training infrastructure that embodied AI products require.

05 Extended Analysis: Three Trends from GTC 2026

Taken together, the signals from GTC 2026 point to a clear shift in the focus of the AI industry. In recent years, competition has largely revolved around model training—parameter scale, training compute, and baseline performance. At this year’s conference, however, attention moved more decisively toward inference efficiency, system deployment, scenario adaptation, and integration with industrial processes.

NVIDIA’s announcements went beyond next-generation computing platforms. They also included system software designed for large-scale inference, data solutions for robotics and autonomous driving (often described as “physical AI”), and closer coordination with industrial software providers, cloud platforms, and infrastructure companies. The direction of travel is becoming clearer: AI development is moving away from a training-centric phase toward one defined by deployment and real-world application.

I. Technological Dimension: Inference, Simulation, and Deployment

First, inference is moving to the center of the AI stack. As companies begin to digest the cost of large-scale training, the focus is shifting toward serving large user bases in real time. That, in turn, is making inference the new battleground. Where competition once centered on single-chip performance and training capacity, it is now shifting toward heterogeneous computing, software orchestration, and full-stack integration. In this context, technical strength is increasingly measured not by model size alone, but by inference speed, system stability, resource efficiency, and cost control.

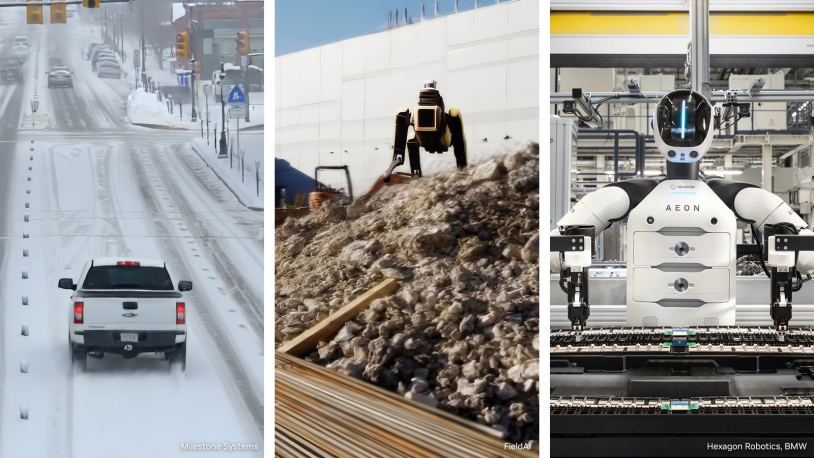

Second, simulation and data generation are becoming more important. At GTC 2026, NVIDIA introduced a “physical AI” data factory Blueprint designed to connect data generation, augmentation, and evaluation in a single workflow, while linking simulation more closely with engineering design and manufacturing. The aim is to reduce the time, cost, and complexity of training systems in areas such as robotics, autonomous driving, and visual AI.
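The generate, augment, and evaluate loop described above can be sketched as a small pipeline. This is not the Blueprint’s API: the `Scenario` type, the night-time augmentation, and the “hard enough to keep” rule in `evaluate` are hypothetical stand-ins, chosen only to show how the three stages feed one another in a single workflow while deliberately retaining rare, high-risk cases.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scenario:
    """A hypothetical synthetic training scenario for a robot or vehicle."""
    scenario_id: int
    obstacle_count: int
    lighting: str     # "day" or "night"
    rare_event: bool  # a long-tail, high-risk situation


def generate(n: int, rng: random.Random) -> list[Scenario]:
    """Stage 1: sample base scenarios (here at random, standing in for a simulator)."""
    return [Scenario(i, rng.randint(0, 10), "day", rng.random() < 0.05)
            for i in range(n)]


def augment(scenarios: list[Scenario]) -> list[Scenario]:
    """Stage 2: add a night-time variant of every scenario to widen coverage."""
    offset = len(scenarios)
    night = [replace(s, scenario_id=s.scenario_id + offset, lighting="night")
             for s in scenarios]
    return scenarios + night


def evaluate(s: Scenario) -> bool:
    """Stage 3: keep scenarios likely to be informative (hypothetical rule)."""
    return s.rare_event or s.obstacle_count >= 5 or s.lighting == "night"


def data_factory(n: int, seed: int = 0) -> list[Scenario]:
    """Run generation, augmentation, and evaluation as one workflow."""
    rng = random.Random(seed)
    return [s for s in augment(generate(n, rng)) if evaluate(s)]


if __name__ == "__main__":
    n = 1_000
    dataset = data_factory(n)
    rare = sum(s.rare_event for s in dataset)
    print(f"kept {len(dataset)} of {2 * n} scenarios, {rare} with rare events")
```

The design choice worth noting is that filtering happens after augmentation, so a rare event sampled once is multiplied into several variants before the evaluator decides what to keep.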

This reflects a broader shift in the technical frontier. AI is moving beyond text and code toward perception, control, and interaction with the physical world. In many real-world applications, relying solely on collected data is both costly and insufficient—particularly for long-tail and high-risk scenarios. As a result, simulation environments, synthetic data, and automated evaluation systems are taking on greater importance. Future breakthroughs may depend less on incremental gains in model performance, and more on advances in simulation, data feedback loops, and the ability to validate models in real-world conditions.

NVIDIA launches the open Physical AI data factory Blueprint, intended to accelerate the development of robotics, visual AI agents, and intelligent vehicles

Source: NVIDIA

Third, AI development is becoming more system-driven. GTC 2026 did not treat chips, software, data, and applications as separate domains, but as parts of a single integrated framework. Competition is no longer confined to individual components. It increasingly extends to advanced manufacturing, chip packaging, optical interconnects, power supply, and cooling systems.

For the technology sector, this shift is likely to deepen convergence across algorithms, chips, networks, simulation, control systems, and engineering applications. Model training capability alone is becoming a less useful measure of technological advantage, and a poorer guide to how the competitive landscape is evolving.

This shift also points to changes in market structure. Reuters has noted that while NVIDIA retains a strong position in training, it faces growing competition in inference from CPUs and customized processors. As AI moves from training to deployment, the technology stack is becoming more diverse. Rather than converging on a single dominant architecture, the field is likely to fragment into multiple approaches shaped by use case, cost, and deployment constraints. In that sense, GTC 2026 was less about showcasing one company’s strengths than about signaling a more complex, application-driven phase of competition.

II. Industrial Dimension: Coordination Across the Value Chain

At GTC 2026, NVIDIA placed as much emphasis on ecosystem coordination as on its own products. The company outlined how its technologies are being integrated across industrial software, cloud infrastructure, hardware systems, and downstream applications. According to official materials, companies such as Cadence, Dassault Systèmes, PTC, Siemens, and Synopsys are embedding NVIDIA’s capabilities into design, verification, and manufacturing workflows. At the same time, cloud providers including Amazon Web Services, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure are providing computing capacity at scale. Hardware vendors such as Dell Technologies, Hewlett Packard Enterprise, and Supermicro are supporting localized and hybrid deployment models.

The broader pattern is clear: AI is moving from isolated software applications toward system-level integration across platforms, processes, and industries.

Jensen Huang and Cadence CEO Anirudh Devgan discuss AI-driven scientific simulation and engineering design during a GTC 2026 breakout session

Source: NVIDIA

At a more macro level, the conference also highlighted how the AI industry is extending into infrastructure. Reuters has reported that NVIDIA is raising expectations around inference-driven growth and large-scale deployment, which analysts interpret as a sign that the industry is moving beyond early experimentation.

In parallel, NVIDIA has proposed working with energy companies to develop so-called “AI factories,” integrating data center construction, power supply, and compute deployment into a unified framework. This points to a broader shift: competition in AI is no longer limited to models and applications, but increasingly tied to land, electricity, cooling capacity, network infrastructure, and industrial systems. In effect, AI is beginning to reshape how capital is allocated across infrastructure and related sectors.

III. Governance Dimension: The Rise of “Sovereign AI”

At GTC 2026, Jensen Huang reiterated the concept of “Sovereign AI,” a framework he has consistently addressed from a governance perspective. Originally introduced at the World Governments Summit in Dubai in February 2024, the concept suggests that nations should maintain control over the AI systems utilized within their borders. According to Huang, this control should encompass not only technology and products but the entire AI ecosystem, spanning from data to infrastructure. Since its introduction, Huang has reportedly advocated for Sovereign AI across multiple platforms, including the World Economic Forum in Davos and high-level meetings with global heads of state. GTC 2026 included dedicated Sovereign AI Developer Conference Sessions, which reportedly convened political and business leaders from Europe, the Middle East, and Africa to discuss the development agenda for local AI ecosystems.

The concept of “Sovereign AI” speaks to anxieties shared by sovereign states and to the differing needs of countries at various stages of development, and appears to offer each of them an implementable AI roadmap. For developed economies such as the European Union, Sovereign AI is seen as a “middle path” between the competing demands of regulation and industrial development. Throughout its digital evolution, the EU has set stringent requirements for data localization and cross-border data flows through the General Data Protection Regulation (GDPR) and the AI Act. However, the rapid advancement of AI in China and the United States has reportedly fueled concerns within the EU that the local AI industry could be dominated by American technology giants, leading to a loss of autonomy in the digital economy.

Huang’s Sovereign AI framework is seen as aligning with European requirements. On April 9, 2025, the European Commission released the AI Continent Action Plan to strengthen the EU’s competitiveness in AI. The plan centers on building up to five “AI Gigafactories” and emphasizes the development of the EU’s own AI infrastructure. Within this context, NVIDIA has reportedly collaborated with firms such as HPE to establish an “AI Factory Lab” in France, designed to provide localized computing environments that comply with EU regulatory standards.

For emerging economies such as India and Vietnam, the concept of “Sovereign AI” speaks directly to security-related concerns in their AI development pathways. In many cases, domestic technological ecosystems remain at an early or catch-up stage, with relatively limited capabilities in advanced chip design and large-scale infrastructure. At the same time, these countries are seeking to participate in the expansion of the AI industry, strengthen their digital competitiveness, and maintain greater control over local languages, cultural contexts, and data security.

In this context, “Sovereign AI” may be seen as offering a more immediately deployable pathway for building what is often described as “secure AI” systems.

Following GTC 2026, Viettel, one of Vietnam’s largest telecom operators, announced a collaboration with NVIDIA to develop a national Sovereign AI ecosystem. The initiative is expected to be built on NVIDIA’s DGX B200 computing platform and its Nemotron model family, supporting the development of AI infrastructure within Vietnam.

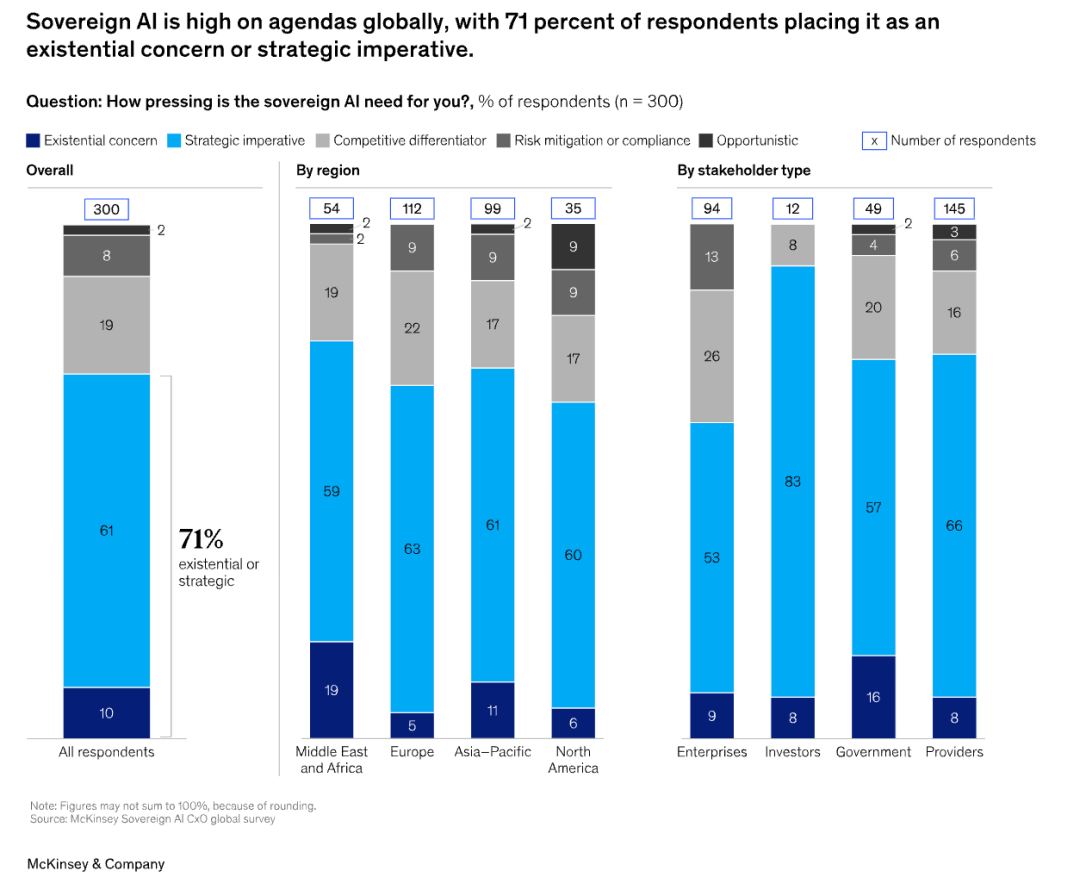

McKinsey forecasts that by 2030, sovereignty requirements could shape AI spending worth an estimated $500 billion to $600 billion

Source: McKinsey & Company

Of course, the promotion of governance concepts such as “Sovereign AI” by Jensen Huang appears to be underpinned by clear commercial considerations. Estimates by McKinsey & Company suggest that by 2030, between 30% and 40% of global AI spending could be influenced by sovereignty-related requirements, with the associated market potentially reaching $500 billion to $600 billion. In this context, Huang’s framing of “Sovereign AI” seems to be directed in part at mid-sized powers and emerging economies, where demand for domestic AI infrastructure is growing. The concept may also position NVIDIA to capture a share of infrastructure development in these markets.
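Taken at face value, the two McKinsey ranges also imply a size for total global AI spending in 2030. Assuming, for illustration only, that the $500 billion to $600 billion corresponds to the same 30% to 40% share (the estimate’s own base may be defined differently), the implied total $T$ follows from dividing the sovereignty-influenced amount $S$ by its share $s$:

$$
T = \frac{S}{s}, \qquad
\frac{500}{0.40} = 1{,}250 \;\le\; T \;\le\; \frac{600}{0.30} = 2{,}000 \quad (\text{billion USD})
$$

On this reading, the estimate presupposes total AI spending of roughly $1.25 trillion to $2 trillion by 2030.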

NVIDIA is already expanding its presence through partnerships with companies such as Amazon, reportedly deploying more than one million GPUs worldwide to support related initiatives. Ahead of GTC 2026, the company also announced a collaboration with Palantir Technologies to introduce a Sovereign AI Operating System Reference Architecture (AIOS-RA), intended to provide governments and enterprises with an integrated solution spanning hardware, software, and application deployment.

Still, the future of “Sovereign AI” remains uncertain, and its alignment with NVIDIA’s roadmap is far from guaranteed. Commentary from outlets such as Bloomberg and Forbes has questioned whether such approaches risk deepening dependence on specific vendors, particularly where hardware, software, and infrastructure are tightly integrated. Some analysts argue that if AI development is framed primarily in terms of infrastructure procurement, it could reinforce dependency even as it lowers entry barriers. Much will depend on how countries balance autonomy, capability-building, and external partnerships in practice.

GTC 2026 offers a snapshot of an industry in transition. The center of gravity is shifting—from training to deployment, from scale to efficiency, and from isolated technologies to integrated systems.

NVIDIA’s vision is influential, but not uncontested. Industry responses remain mixed, investor sentiment is uneven, and national approaches to governance continue to diverge. What emerges is a more complex landscape, shaped as much by real-world constraints as by technological ambition. Whether the trends highlighted at GTC 2026 translate into sustained gains will depend less on narrative momentum, and more on how these competing forces play out in practice.

References

1. https://www.nvidia.com/gtc/sessions/sovereign-ai/

2. https://www.nvidia.com/gtc/

3. https://blogs.nvidia.com/blog/ai-future-open-and-proprietary/

4. https://futurumgroup.com/insights/has-the-token-economy-arrived-decoding-nvidias-gtc-and-the-future-of-ai/

5. https://www.scmp.com/tech/big-tech/article/3347495/how-china-could-dominate-ai-eras-tokenomics-vast-power-grids-and-low-cost-models

6. https://www.forbes.com/sites/moorinsights/2026/03/26/nvidia-gtc-2026-and-the-ambitious-path-to-1-trillion-in-ai-revenue/

7. https://www.tmtpost.com/7919219.html

8. https://www.ft.com/content/ae8dc056-1613-414b-ba75-c34aba0056f2

9. https://www.reuters.com/technology/nvidia-ceo-huang-says-countries-must-build-sovereign-ai-infrastructure-2024-02-12/

10. https://www.mobileworldlive.com/ai-cloud/hpe-nvidia-deepen-ties-to-bolster-sovereign-ai/

11. https://www.economist.com/business/2025/07/13/can-nvidia-persuade-governments-to-pay-for-sovereign-ai

12. https://www.reuters.com/business/media-telecom/nvidias-pitch-sovereign-ai-resonates-with-eu-leaders-2025-06-16/

13. https://www.businesswire.com/news/home/20260312795208/en/Palantir-and-NVIDIA-Team-to-Deliver-Sovereign-AI-Operating-System-Reference-Architecture

14. https://en.vie https://www.ddn.com/resources/success-stories/fpt-ai-factory-powering-sovereign-ai-with-ddn-and-nvidia/

15. https://www.bloomberg.com/opinion/articles/2025-06-18/nvidia-s-sovereign-ai-could-win-a-prize-for-irony

16. https://www.forbes.com/sites/greatspeculations/2026/03/17/is-sovereign-ai-nvidias-secret-weapon/

17. https://en.vietnamplus.vn/viettel-partners-with-nvidia-to-build-sovereign-ai-ecosystem-post339518.vnp

Original link: https://mp.weixin.qq.com/s/QceaRbp8MGQ8oSP9RzqWRg

Authors

Yao Xu, Secretary-General of CGAIG and Associate Professor at FDDI

Xin Yanyan, Deputy Secretary-General of CGAIG and Assistant Research Fellow at FDDI

Zhang Ao, Research Assistant of CGAIG

Zhong Yifei, Research Assistant of CGAIG

Yu Yue, Research Assistant of CGAIG

Yuan Luming, Research Assistant of CGAIG

Wang Yibo, Research Assistant of CGAIG